This year at Amazon’s re:Invent conference, Rekognition premiered as a new service. It is a “deep-learning AI” service that performs image recognition on pictures you show it. It provides labels of objects and concepts it finds in the image. It can also do facial recognition.

Hitting the Rekognition API is only a few lines of Ruby code. Uploading images to S3 (one of the ways you can pass an image to Rekognition) is similarly a few lines of code. That makes it easy to fling images at the service.
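To give a feel for what "a few lines" means, here is a rough sketch of the upload-then-label round trip. It is not the code from the GitHub project: the helper names (`detect_labels_params`, `format_labels`, `label_image`), the default region, and the label limits are all made up for illustration, and it assumes the `aws-sdk-s3` and `aws-sdk-rekognition` gems plus working AWS credentials.

```ruby
# Sketch only: helper names, defaults, and the region are hypothetical.

# Build the parameters for Rekognition's DetectLabels call for an image
# that already lives in S3.
def detect_labels_params(bucket, key, max_labels: 10, min_confidence: 50.0)
  {
    image: { s3_object: { bucket: bucket, name: key } },
    max_labels: max_labels,
    min_confidence: min_confidence
  }
end

# Turn label records (anything responding to #name and #confidence)
# into printable lines, highest confidence first.
def format_labels(labels)
  labels.sort_by { |l| -l.confidence }
        .map { |l| format('%-20s %5.1f%%', l.name, l.confidence) }
end

# Upload a local image to S3, then ask Rekognition to label it.
# Needs the aws-sdk-s3 and aws-sdk-rekognition gems and AWS credentials.
def label_image(path, bucket:, key: File.basename(path), region: 'us-east-1')
  require 'aws-sdk-s3'
  require 'aws-sdk-rekognition'

  Aws::S3::Resource.new(region: region).bucket(bucket).object(key).upload_file(path)
  rekognition = Aws::Rekognition::Client.new(region: region)
  rekognition.detect_labels(detect_labels_params(bucket, key)).labels
end

# Usage: ruby label_image.rb waffles.jpg my-bucket
if __FILE__ == $PROGRAM_NAME && ARGV.length == 2
  puts format_labels(label_image(ARGV[0], bucket: ARGV[1]))
end
```

Each returned label carries a name and a confidence percentage, which is where the numbers quoted in the results come from.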

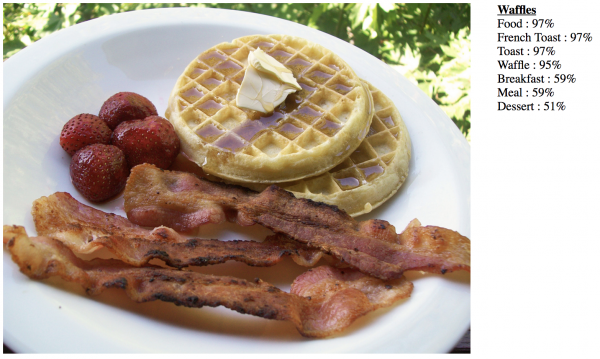

I quickly ran out of interesting images on my laptop, so I moved on to taking the results of Google Image Search and feeding those into Rekognition. After a few rounds of this, I found my subconscious goal was to fool the image recognition system in interesting and hilarious ways. For example, it did pretty terribly with waffles and bacon.

With a little more scripting, I was able to take snapshots of video frames from movies, upload them to S3, and perform image recognition.
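The frame-grabbing step could look something like the sketch below. It assumes `ffmpeg` is installed and uses its `fps` filter to save one frame per minute; the actual script may well use a different tool, and the function names and output file pattern here are invented for the example.

```ruby
# Sketch only: assumes ffmpeg is on the PATH; names and paths are hypothetical.

# Build an ffmpeg command that saves one JPEG every `interval_seconds`.
def snapshot_command(video_path, out_dir, interval_seconds: 60)
  [
    'ffmpeg',
    '-i', video_path,
    '-vf', "fps=1/#{interval_seconds}",   # one frame per interval
    File.join(out_dir, 'frame_%04d.jpg')  # frame_0001.jpg, frame_0002.jpg, ...
  ]
end

# Run the command and return the extracted frame paths in order.
def extract_frames(video_path, out_dir, interval_seconds: 60)
  require 'fileutils'
  FileUtils.mkdir_p(out_dir)
  ok = system(*snapshot_command(video_path, out_dir, interval_seconds: interval_seconds))
  raise "ffmpeg failed for #{video_path}" unless ok

  Dir.glob(File.join(out_dir, 'frame_*.jpg')).sort
end
```

From there, each extracted frame is just another still image to upload to S3 and hand to Rekognition.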

For the nitty-gritty details of how the script works and/or to try it yourself, take a look at the GitHub project page. For the rest of you, here are some of the more interesting results…

One thing I learned is that quite a lot of movie scenes feature potted plants. For reals. I don’t think I’ll ever be able to watch a movie again without spotting the potted plants. But the Rekognition AI also seems to have a weird fetish for potted plants. Sure, it legitimately finds them when they’re present. But it also mistakes quite a few other things for them.

Background trees, I can see. Without a good sense of distance and scale, I can understand how these trees in Chappie might look like desk plants to an AI.

I can also see how this spiked buggy from Mad Max might also look like a potted plant, if you squinted enough and were hit on the head with a hammer repeatedly.

Overall, Mad Max did surprisingly well in the recognizer, with only a few minor hiccups like that one. It thought that TRON Legacy was all fancy stage lighting, computers, and (wha…?) American football. But I do agree that the light cycles would make great taxis. Just don’t get into an accident; they seem a little fragile.

But back to the plants — or lack thereof — this scene from Ghostbusters? Where’s the plant?

To its credit, it did a great job of sussing out that this scene is in a subway, with minimal evidence beyond two signs, tile walls, and an exit turnstile.

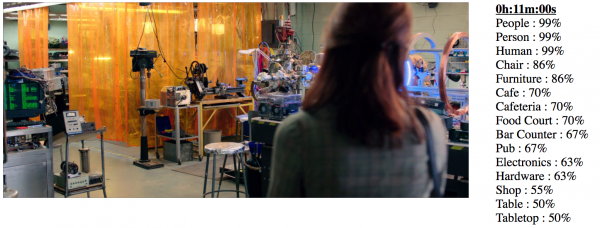

It didn’t do so well with this “food court.”

Although it did a great job spotting that one character was acting as a photographer, the night club and beverage were pretty far off — unless you count ectoplasm as a beverage.

What kind of creepy fish do you think are in these aquariums? Remind me to never buy them.

Rekognition picked up that the Ghostbusters wear military-like uniforms, but lost a few points for thinking this might be a confection factory.

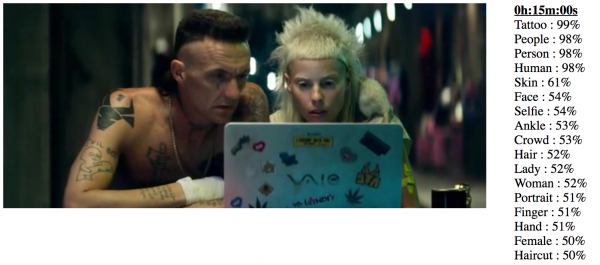

It did a surprisingly good job of recognizing that Ninja has tattoos in Chappie.

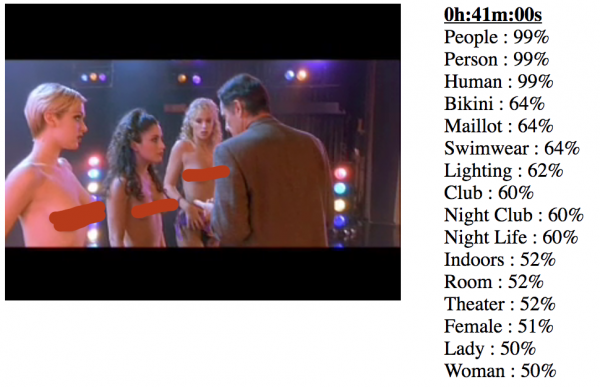

But it did a less-good job recognizing that the Showgirls are nude, and not simply wearing a bikini. Picture redacted, to pull us back from that NC-17 rating.

In TRON Legacy, it did a good job sensing Spandex, even if it thought it was worn by mannequins.

The AI has difficulty getting a fix on just what exactly Deadpool is. Is he some sort of footwear? A cowboy or riding boot, perhaps?

Wait, no. Everyone knows that Deadpool is a clown.

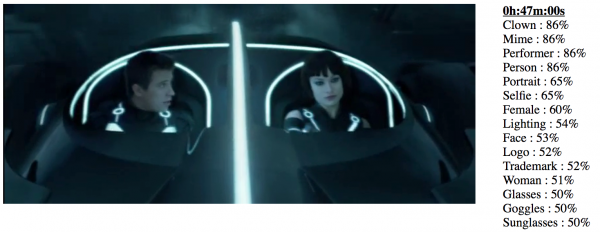

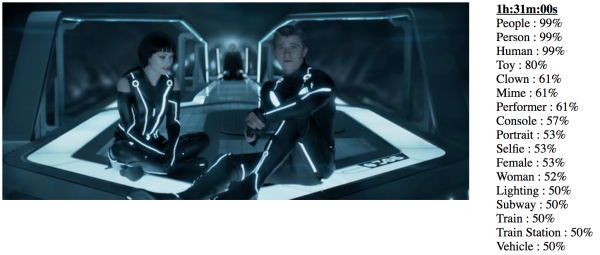

Speaking of clowns, the goth look of porcelain white skin and heavy eye makeup seems to trigger the “clown” and “mime” neurons, as in these scenes from TRON Legacy:

Let’s caramelize some food on the BBQ, perhaps take a nap, and move on.

Remember on Star Trek, errrr... Galaxy Quest, when they carried around little Easy-Bake ovens slung over their shoulders?

Don’t look now, the clowns and mimes are back.

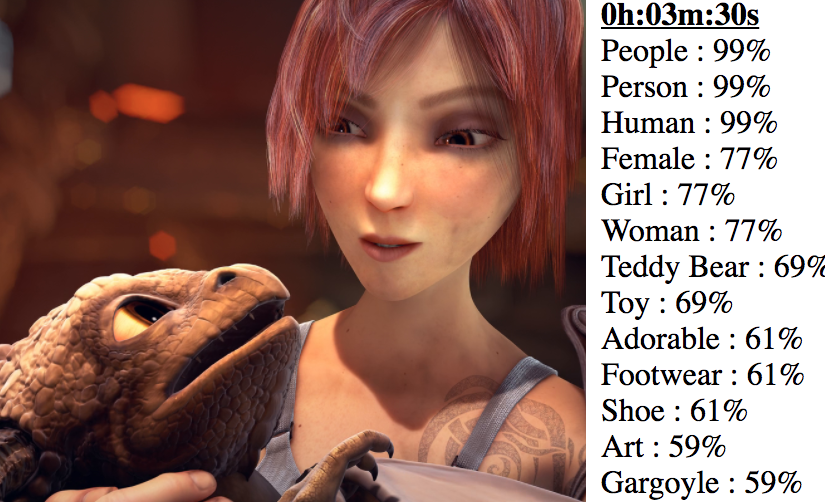

On the other hand, the AI did a really great job knowing that this is an alien who kind of resembles a dragon or gargoyle.

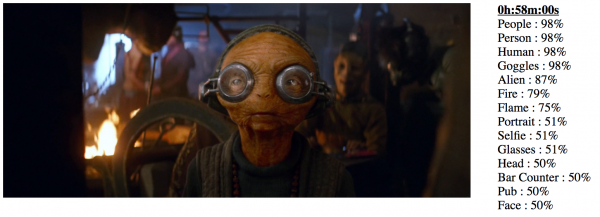

It also did a good job seeing that Maz from The Force Awakens is an alien wearing goggles.

Chewbacca is an ape? Sure. I don’t know what else you’d categorize him as.

It does a good job knowing that BB-8 and Chappie are robots.

It also believes Stormtroopers to be robots, something I was a little unsure of myself back when I was a kid.

On the other hand, I suspect that even the slowest kid isn’t going to see the downed Imperial Star Destroyers as lace and mud. Can you imagine the size of that doily?

The Force Awakens opening credits are just one big brochure for the rest of the movie.

And in the Harry Potter opening credits, the AI thinks that someone left a birthday cake in the storm. Or maybe they released another sort of dessert in outer space?

Yes, yes. We all know that Dudley is a big baby.

It doesn’t understand Quidditch, but can successfully reason that this looks like a team.

Unfortunately, it’s a little less successful with Quidditch brooms, which all have an afro hairstyle.

In other confusion, it believes the people riding brooms through the air to be various species of bird. Are you a Seeker or a Vulture?

I thought this image was interesting. It doesn’t quite get that this is Gringotts Bank, instead thinking it is a shrine or temple (to capitalism!), but it does really like the flooring — focusing on the labyrinth-like texture.

And speaking of Labyrinth — there is a 98% chance that David Bowie is a woman.

Clowns, man. Clowns.

In conclusion, if you want to check out the raw data, I’ve made available the screenshots (at one-minute intervals) and the corresponding recognized labels: Chappie, Deadpool, Galaxy Quest, Ghostbusters, Harry Potter, Labyrinth, Mad Max, Showgirls, Star Wars: The Force Awakens, TRON Legacy, UHF. And again, the script (and how to set it up) lives on GitHub as Video Rekognizer, if you want to try your own content.