About a month ago, I got a pair of security cameras for the house. It took a bit of research to arrive at the right ones. There are a lot of cameras on the market, and most of them are cheap things locked into a vendor ecosystem. It was a bit more expensive, but I settled on cameras with an open architecture. They’re open on three different fronts.

First, the hardware is standard. It’s just wired Ethernet, fed by Power over Ethernet. It’s a little more industrial or “enterprise” than home hardware, but it’s a standard with a lot of support. Second, the protocols it speaks over Ethernet are all documented, well-known standards. You can talk to it over RTSP to start a UDP stream. That video stream is just H.264 using the Main profile. There’s nothing proprietary about accessing the video from tools and scripts. Third, although its openness means there is quite a lot of third-party software that works with it, the software it ships with does a great job and writes videos to disk as standard MP4 files.
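To give a feel for what "accessing the video from tools and scripts" can look like, here is a minimal sketch that builds an `ffmpeg` command to copy a few seconds of the RTSP stream into an MP4 file without re-encoding. The IP address, the credentials, and the stream path `h264Preview_01_main` are assumptions for illustration; check your camera's documentation for the real RTSP URL.

```python
from urllib.parse import quote


def build_capture_command(host, user, password, seconds, out_path,
                          stream_path="h264Preview_01_main"):
    """Return an ffmpeg argument list that saves `seconds` of RTSP video.

    The stream path default is an assumption, not a documented guarantee.
    """
    # Percent-encode credentials so characters like '@' do not break the URL.
    url = (f"rtsp://{quote(user, safe='')}:{quote(password, safe='')}"
           f"@{host}:554/{stream_path}")
    return [
        "ffmpeg",
        "-rtsp_transport", "udp",  # request RTP delivery over UDP
        "-i", url,
        "-t", str(seconds),        # stop after this many seconds
        "-an",                     # drop audio, keep the sketch video-only
        "-c", "copy",              # keep the H.264 stream as-is, no re-encode
        out_path,
    ]
```

Passing the returned list to `subprocess.run(cmd, check=True)` would perform the capture, assuming `ffmpeg` is installed and the camera is reachable at that address.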

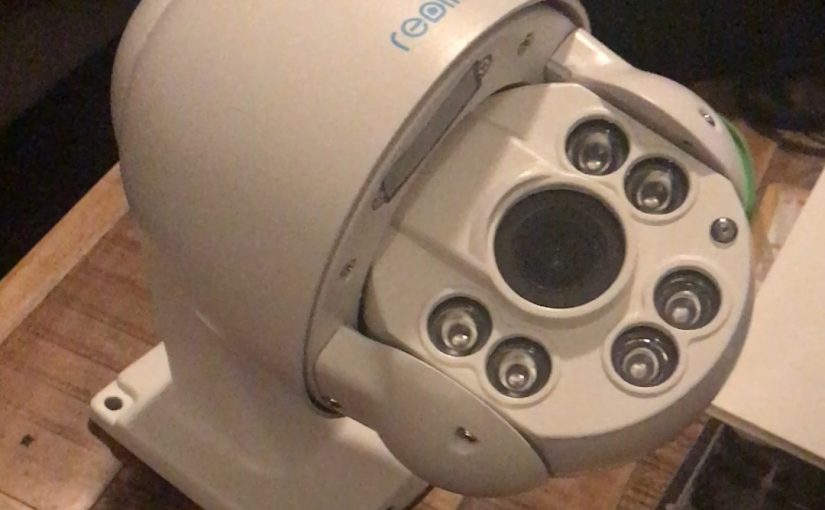

Specifically, the models I have are Reolink RLC-423 and RLC-422. The first one is fully pan/tilt/zoom controllable. You can even set up positional presets. I have three: one looking at the side doors, one that looks up the front walk, and one that zooms in really close to the bird feeder. The second camera is simpler. It’s fixed in position and only supports remote zoom and focus. Both have a large number of infrared LEDs that kick in after dark, acting as a flood lamp.

These are each connected to a Power-Over-Ethernet module. The ones I picked up are inexpensive, but do feel a little cheap compared to other network gear I’ve worked with.

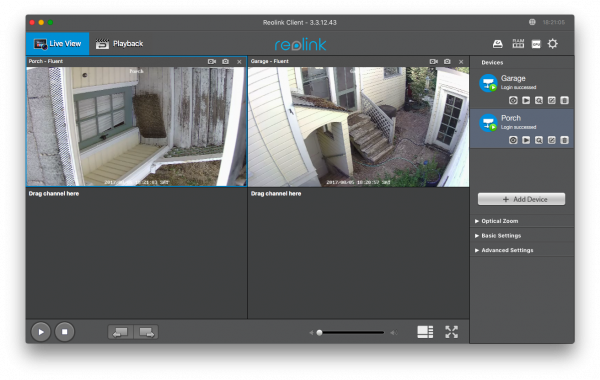

Further down the wire, these feed into my home network. I use DHCP to assign them known IP addresses, so that software can always find them quickly by numeric address. A laptop then runs the Reolink client software to monitor and control the cameras.

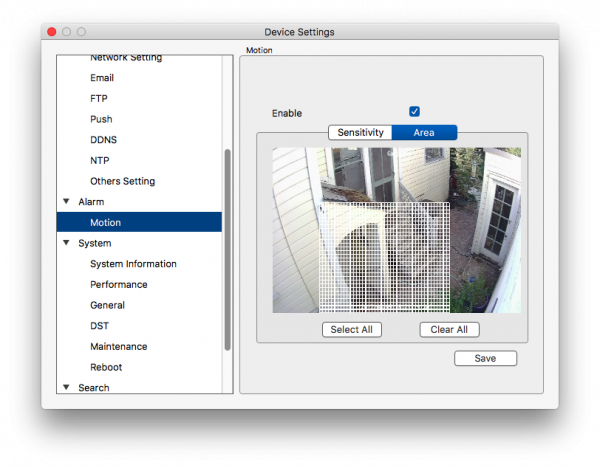
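Because the cameras sit at known addresses, a script can check on them directly. The following is a rough sketch that tests whether each camera accepts a TCP connection on port 554, the default RTSP port. The camera names and IP addresses are hypothetical placeholders, not my actual configuration.

```python
import socket

# Hypothetical names and addresses; substitute whatever DHCP hands out.
CAMERAS = {
    "front-ptz": "192.168.1.50",
    "side-fixed": "192.168.1.51",
}


def is_reachable(host, port=554, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_cameras(cameras, port=554, timeout=1.0):
    """Map each camera name to whether its RTSP port answered."""
    return {name: is_reachable(ip, port, timeout)
            for name, ip in cameras.items()}
```

Calling `check_cameras(CAMERAS)` returns a dictionary of name to `True` or `False`, which is enough to drive a simple "camera is down" alert.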

The software lets you define motion zones to monitor on a schedule:

The client software is always buffering the last 5 seconds of video, so when it detects motion, it records not only the motion itself, but 5 seconds before as well as after.

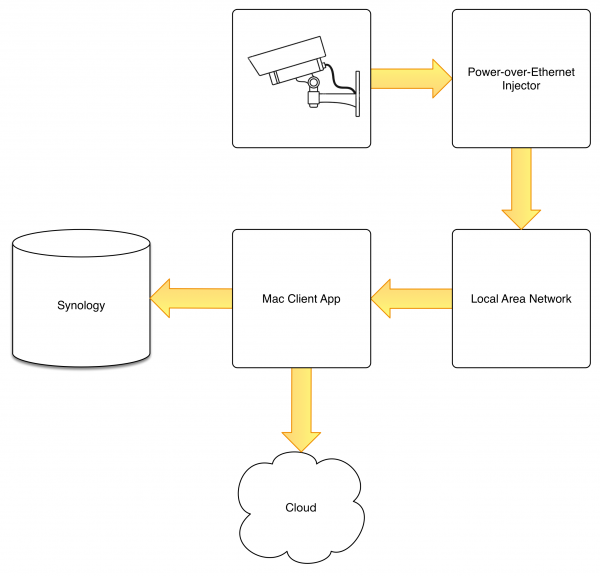
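The pre-roll behavior is easy to picture as a ring buffer. This is only a sketch of the general technique, not how the Reolink client is actually implemented: a fixed-size `deque` holds the most recent frames, and when motion starts, the clip begins with whatever is in the buffer. The class name, the frame rate, and the `motion` flag are all assumptions for illustration.

```python
from collections import deque


class PreRollRecorder:
    """Collect clips that include frames from before and after motion."""

    def __init__(self, fps, pre_seconds=5, post_seconds=5):
        # Ring buffer: old frames fall off automatically once it is full.
        self.pre = deque(maxlen=fps * pre_seconds)
        self.post_frames = fps * post_seconds
        self.remaining = 0   # post-roll frames still to capture
        self.clip = None     # frames of the clip in progress, if any

    def push(self, frame, motion):
        """Feed one frame; return a finished clip (list) or None."""
        if motion:
            if self.clip is None:
                # Motion just started: seed the clip with the pre-roll.
                self.clip = list(self.pre)
                self.pre.clear()
            self.clip.append(frame)
            self.remaining = self.post_frames  # restart the post-roll timer
            return None
        if self.clip is not None:
            # Motion has stopped; keep recording until post-roll runs out.
            self.clip.append(frame)
            self.remaining -= 1
            if self.remaining <= 0:
                done, self.clip = self.clip, None
                return done
            return None
        # Idle: just keep the rolling buffer fresh.
        self.pre.append(frame)
        return None
```

With a 1 fps feed, a 2-second pre-roll, and a 2-second post-roll, motion on frames 4 and 5 produces a single clip covering frames 2 through 7.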

What I really want is for these video clips to end up on my Synology NAS for more robust storage, but I was hesitant to mount the NAS directly. I’ve been in situations where the mount gets screwy and things go wrong. Either the files don’t get written, or the mount point reconnects under a different name (such as /Volumes/Storage-1 instead of /Volumes/Storage) and the files get written to a local folder with the old name of the mount point. Instead, I set up the Cloud Station Drive client and server app. This works a little like Dropbox, but syncing only on the LAN to the NAS. I configured the video app to write to the local disk, then set up Cloud Station Drive to sync that set of video folders to the NAS. It seems to work pretty well. The Mac also pushes those files out to cloud storage, for off-site backup.
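For anyone who does write straight to a mounted share, the stale-mount failure can at least be detected. Here is a minimal sketch, not something my setup uses, that refuses to copy a file unless the destination really is a mount point. The function and exception names are hypothetical, and the check relies on `os.path.ismount` from the standard library.

```python
import os
import shutil


class MountMissingError(RuntimeError):
    """Raised when the expected volume is not actually mounted."""


def copy_to_mount(src, mount_point, relative_dir=""):
    """Copy src under mount_point, but only if mount_point is a real mount.

    This avoids silently filling a plain local folder that happens to
    share the name of the missing volume.
    """
    if not os.path.ismount(mount_point):
        raise MountMissingError(f"{mount_point} is not a mounted volume")
    dest_dir = os.path.join(mount_point, relative_dir)
    os.makedirs(dest_dir, exist_ok=True)
    # copy2 preserves timestamps, which is handy for dated video clips.
    return shutil.copy2(src, dest_dir)
```

A call such as `copy_to_mount("clip.mp4", "/Volumes/Storage", "cameras")` raises `MountMissingError` when the volume has dropped off or come back as `/Volumes/Storage-1`, instead of writing into a local folder with the old name.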

Overall, the architecture looks like this:

This allows for secure exfiltration of the video clips to other computers or devices, synchronized via an S3 bucket in the cloud. It very specifically prevents direct access to camera control and the live video feeds from the outside world. When I’m at home, on my own network, there’s an iOS app that lets me view and position the cameras, but no way to do that from outside the house’s network.

Has this setup secured the house? I don’t know. Mostly I end up with a ton of videos of my outdoor cat pacing around or chasing things. I also get confirmation about package delivery. The important thing is that I have the peace of mind that if something sneaky were to happen, I’d have video at home (well, assuming the sneakiness didn’t run off with my NAS), with a backup in the cloud. SQUIRREL!

I do like looking through the videos, to get a feel for what’s going on throughout the day and evening, but when you get anywhere between 50 and 300 video clips per day, ranging from 12 seconds to 30 seconds each, that can be a lot of video to slog through. At the extremes, that works out to somewhere between 10 minutes and two and a half hours of footage per day.

In the next blog post, I’ll show you the video and AI processing I perform on the clips to help manage that review.